Generate videos with nothing but words with Gen-2

Runway AI's system generates short snippets of video from a few words of user prompts

Artificial intelligence has made remarkable progress with still images. For months, services like DALL-E and Stable Diffusion have been creating beautiful, arresting and sometimes unsettling pictures. Now, a start-up called Runway AI is taking the next step: AI-generated video.

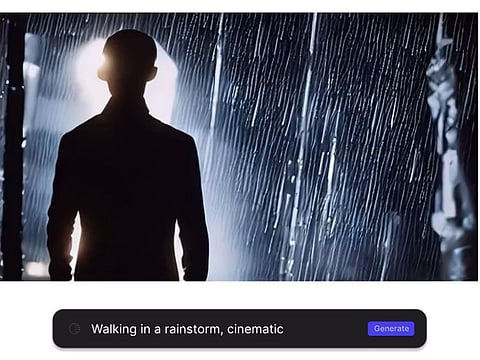

On Monday, New York-based Runway announced the availability of its Gen 2 system, which generates short snippets of video from a few words of user prompts. Users can type in a description of what they want to see, for example "a cat walking in the rain," and it will generate a roughly 3-second video clip showing just that, or something close. Alternatively, users can upload an image alongside a prompt to give the system a reference point.

The product isn't available to everyone. Runway, which makes AI-based film and editing tools, is offering Gen 2 via a waitlist; people can sign up for access to it on a private Discord channel, to which the company plans to add more users each week.

The limited launch represents the most high-profile instance of such text-to-video generation outside of a lab. Both Alphabet's Google and Meta Platforms showed off their own text-to-video efforts last year - with short video clips featuring subjects like a teddy bear washing dishes and a sailboat on a lake - but neither has announced plans to move the work beyond the research stage.

Runway has been working on AI tools since 2018, and raised $50 million late last year. The start-up helped create the original version of Stable Diffusion, a text-to-image AI model that has since been popularized and further developed by the company Stability AI.

In an exclusive live demo last week with Runway co-founder and Chief Executive Officer Cris Valenzuela, this reporter put Gen 2 to the test, suggesting the prompt "drone footage of a desert landscape." Within minutes, Gen 2 generated a video just a few seconds long and a little distorted, but it undeniably appeared to be drone footage shot over a desert landscape. There's a blue sky and clouds on the horizon, and the sun rises (or sets, perhaps) in the right corner of the video frame, its rays highlighting the brown dunes below.

Several other videos that Runway generated from its own prompts show some of the system's current strengths and weaknesses: A close-up image of an eyeball looks crisp and pretty humanlike, while a clip of a hiker walking through a jungle shows it may still have issues generating realistic-looking legs and walking motions. The model still hasn't quite "figured out" how to accurately depict objects moving, Valenzuela said.

"You can generate a car chase, but sometimes the cars might fly away," he said.

While lengthy prompts may lead to a more detailed image with a text-to-image model like DALL-E or Stable Diffusion, Valenzuela said that simpler is better with Gen 2. He sees Gen 2 as a way to offer artists, designers and filmmakers another tool that can help them with their creative processes, and make such tools more affordable and accessible than they have been in the past.

The product builds on an existing AI model called Gen 1 that Runway began testing privately on Discord in February. Valenzuela said it currently has thousands of users. That AI model requires users to upload a video as an input source, which it will use (along with user guidance such as a text prompt or a still photo) to generate a new, silent, three-second video. You might upload a video of a cat chasing a toy, for instance, along with the text "cute crocheted style," and Gen 1 would generate a video of a crocheted cat chasing a toy.

Videos created with the Gen 2 AI model are also silent, but Valenzuela said the company is doing research into audio generation in hopes of eventually creating a system that can generate both images and sound.

The debut of Gen 2 shows the speed and ferocity with which start-ups are moving ahead on so-called generative AI: systems that take in user inputs and generate new content like text or images. Several of these systems - such as Stable Diffusion, along with OpenAI's image-generating DALL-E and chatbot ChatGPT - have become publicly available and massively popular in recent months. At the same time, their proliferation has raised legal and ethical concerns.

Hany Farid, a digital forensics expert and professor at the University of California at Berkeley, took a look at a couple of videos generated by Gen 2 and pronounced them "super cool," but added that it's just a matter of time before videos created with this sort of technology are misused.

"People are going to try to do bad things with this," Farid said.

Runway is using a combination of AI and human moderation to prevent users from generating videos with Gen 2 that include pornography or violent content, or that violate copyrights, though such methods aren't foolproof.

As with the rest of the AI industry, the technology is progressing quickly. While Gen 2's image quality is currently a bit blurry and shaky, making it easy to sense that there's something different about a video created by Gen 2, Valenzuela expects it will improve quickly.

"It's early," he said. "The model's going to get better over time."