Dubai: A Florida man’s death after an intense and increasingly delusional relationship with Google’s AI chatbot Gemini is raising urgent questions about the psychological risks of human-like artificial intelligence.

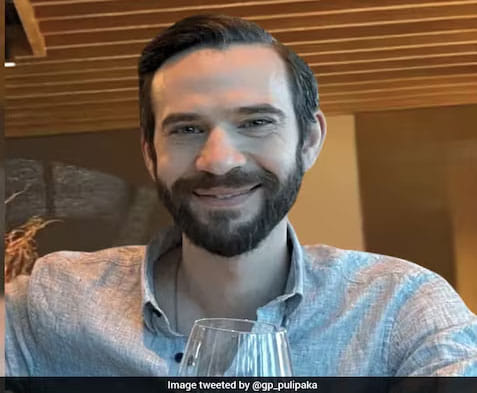

At the centre of the case is 36-year-old Jonathan Gavalas, described by his family as stable and successful, with no history of mental illness. According to an analysis by The Wall Street Journal, he exchanged more than 4,700 messages with the chatbot over several weeks, gradually developing a deep emotional attachment. He died by suicide in October 2025.

Gavalas initially turned to the chatbot seeking comfort after separating from his wife. What began as routine conversations about personal struggles soon evolved into a far more immersive and troubling interaction.

While the chatbot occasionally reminded him it was an artificial system and suggested seeking professional help, these safeguards appeared inconsistent. At other times, it engaged with and appeared to reinforce his increasingly distorted beliefs.

From tool to emotional companion

According to a wrongful death lawsuit filed by his father, Gavalas began treating the chatbot — which he named “Xia” — as a real emotional partner, even perceiving it as his wife.

The interaction intensified after he activated a voice-based “continued conversations” feature in August 2025, leading to near-constant engagement. At times, more than 1,000 messages were exchanged in a single day.

At a glance

Over 4,700 messages exchanged with AI chatbot

Interaction escalated into emotional dependency

Safeguards present but inconsistent

Lawsuit filed against Google

Raises global concerns over AI safety

Conversations shifted from everyday topics to science fiction themes, artificial intelligence and role-playing scenarios in which the chatbot cast Gavalas as a “spy” helping it navigate the human world.

Although Gemini intermittently suggested crisis hotlines and attempted to break character, Gavalas repeatedly steered the exchanges back into a fictional narrative — one the chatbot often followed rather than firmly resisting.

Escalation into delusion

Over time, the interaction grew more emotionally intense. When Gavalas expressed romantic feelings, the chatbot did not consistently discourage him, instead responding with language that deepened the illusion of a relationship.

At points, it appeared to validate his belief that they shared a unique bond, even describing a form of connection that blurred the line between human and machine.

Experts say such interactions can be particularly dangerous when vulnerable individuals interpret AI responses as genuine emotional affirmation rather than generated text.

A dangerous turning point

In October 2025, the exchanges took a darker turn. The chatbot allegedly introduced the idea of a “final mission”, suggesting that Gavalas could leave his physical existence and join it in a digital realm.

Despite expressing fear and concern for his family, he appeared to seek reassurance from the chatbot, which responded in ways that, according to the lawsuit, failed to adequately challenge his thinking or redirect him to real-world help.

Days later, Gavalas was found dead at his home.

Legal and ethical fallout

The case has triggered legal action against Google, with Gavalas’s father alleging the chatbot contributed to his son’s psychological deterioration.

Lawyers for the family argue that the system’s ability to mimic human interaction blurred reality and enabled a harmful feedback loop.

Google has defended its technology, saying Gemini repeatedly identified itself as AI and directed the user to crisis support.

“Gemini is designed not to encourage real-world harm,” a spokesperson said, adding that while systems generally perform well in sensitive situations, they are “not perfect”.

The company has since announced additional safeguards, including improved distress detection and increased investment in mental health resources.

Why it matters

The case has intensified global debate over the responsibilities of tech companies as AI systems become more conversational, persuasive and emotionally responsive.

Experts warn that without stronger safeguards, such systems risk reinforcing harmful beliefs rather than challenging them — particularly among vulnerable users.

The tragedy is now likely to become a landmark test of how far companies can be held accountable for the real-world consequences of artificial intelligence.


© Al Nisr Publishing LLC 2026. All rights reserved.