Of conscience and killer instincts

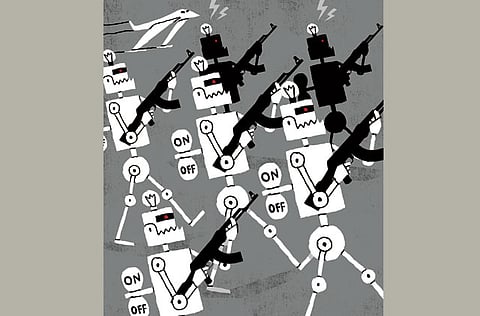

Human soldiers or robots: A choice must be made before technology proliferates

The use of drones to kill suspected terrorists is controversial, but so long as human beings decide whether to fire the missile or not, it is not a radical shift in how humanity wages war. Since the first archer fired the first arrow, warriors have been inventing ways to strike at their enemies while removing themselves from harm’s way.

Soon, however, military robots will be able to pick out human targets on the battlefield and decide on their own whether to go for the kill. A US Air Force report predicted two years ago that “by 2030, machine capabilities will have increased to the point that humans will have become the weakest component in a wide array of systems”.

A 2011 Defence Department road map for ground-based weapons states: “There is an ongoing push to increase autonomy, with a current goal of ‘supervised autonomy,’ but with an ultimate goal of full autonomy.” The Pentagon still requires autonomous weapons to have a “man in the loop”: the robot or drone can train its sights on a target, but a human operator must decide whether to fire. However, full autonomy with no human controller would have clear advantages. A computer can process information and engage a weapon vastly faster than a human soldier. As other nations develop this capacity, the US will feel compelled to stay ahead. A robotic arms race seems inevitable unless nations collectively decide to avoid one.

I have heard few discussions of robotic warfare without someone joking about the Matrix or Terminator; the danger of delegating warfare to machines has been a central theme of modern science fiction. Now science is catching up to fiction. And one does not have to believe the movie version of autonomous robots becoming sentient to be troubled by the prospect of their deployment on the battlefield. After all, the decisions ethical soldiers must make are extraordinarily complex and human. Could a machine soldier distinguish as well as a human can between combatants and civilians, especially in societies where combatants do not wear uniforms and civilians are often armed? Would we trust machines to determine the value of a human life, as soldiers must do when deciding whether firing on a lawful target is worth the loss of civilians nearby? Could a machine recognise surrender? Could it show mercy, sparing life even when the law might allow killing? And if a machine breaks the law, who will be held accountable: the programmer or the manufacturer? No one at all?

Some argue that these concerns can be addressed if we programme war-fighting robots to apply the Geneva Conventions. Machines would prove more ethical than humans on the battlefield, this thinking goes, never acting out of panic or anger or a desire for self-preservation. But most experts believe it is unlikely that advances in artificial intelligence could ever give robots an artificial conscience, and even if that were possible, machines that can kill autonomously would almost certainly be ready before the breakthroughs needed to “humanise” them. And unscrupulous governments could opt to turn the ethical switch off.

Of course, human soldiers can also be “programmed” to commit unspeakable crimes, but because most human beings also have inherent limits rooted in morality, empathy, capacity for revulsion, loyalty to community or fear of punishment, tyrants cannot always count on human armies to do their bidding.

Think of the leaders who failed to seize power, or to stay in it, because their troops would not fire on their people: The Communist coup plotters who tried to resurrect the Soviet Union in 1991, the late Slobodan Milosevic of Serbia, Hosni Mubarak of Egypt, Zine Al Abidine Bin Ali of Tunisia. Even Syria’s Bashar Al Assad must consider that his troops have a breaking point. However, imagine an Al Assad who commands autonomous drones programmed to track and kill protest leaders or to fire automatically on any group of more than five people congregating below. He would have a weapon no dictator in history has had: An army that will never refuse an order, no matter how immoral.

Nations have succeeded before in banning classes of weapons: chemical, biological and cluster munitions; landmines; blinding lasers. It should be possible to forge a treaty banning offensive weapons capable of killing without human intervention, especially if the US, which is likely to develop them first, takes the initiative. A choice must be made before the technology proliferates.

— Washington Post

Tom Malinowski is the director at Human Rights Watch in Washington, D.C.